Entropy and the Second Law of Thermodynamics: Study Notes


Entropy and the Second Law of Thermodynamics

Introduction to Entropy and the Second Law

The concept of entropy is central to the Second Law of Thermodynamics, which determines the direction in which thermal processes occur and limits the efficiency of heat engines and refrigerators. Entropy provides a quantitative measure of disorder and explains why certain processes are irreversible.

Heat Engines and the Second Law

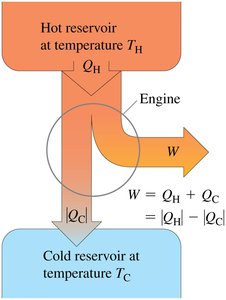

Basic Operation of Heat Engines

Heat engines operate in a cycle. They absorb heat \(Q_H\) from a hot reservoir at temperature \(T_H\), perform useful work \(W\), and discard some heat \(Q_C\) to a cold reservoir at temperature \(T_C\). This behavior is central to the Second Law of Thermodynamics, which states that not all absorbed heat can be converted into work; some must always be expelled to a colder reservoir.

Kelvin's Statement: It is impossible for any device operating on a cycle to absorb heat from a single reservoir and produce an equivalent amount of work without expelling some heat to a colder reservoir.

Work Output: \( W = Q_H + Q_C = |Q_H| - |Q_C| \), where \( Q_C < 0 \) because heat leaves the engine.
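The energy bookkeeping for one engine cycle can be sketched in Python as follows. This is a minimal illustration that assumes all heats are passed as positive magnitudes in joules. The function names and the 2000 J / 1500 J figures are hypothetical.

```python
def engine_work(q_hot: float, q_cold: float) -> float:
    """Net work per cycle, W = |Q_H| - |Q_C| (both heats given as positive magnitudes, J)."""
    if q_hot <= 0 or q_cold < 0:
        raise ValueError("expect |Q_H| > 0 and |Q_C| >= 0")
    if q_cold == 0:
        # Kelvin's statement: a cyclic engine must reject some heat to a colder reservoir
        raise ValueError("a cyclic heat engine cannot reject zero heat")
    if q_cold > q_hot:
        raise ValueError("|Q_C| > |Q_H| would mean negative work output")
    return q_hot - q_cold


def thermal_efficiency(q_hot: float, q_cold: float) -> float:
    """Fraction of the absorbed heat converted to work, e = W / |Q_H|."""
    return engine_work(q_hot, q_cold) / q_hot


# Hypothetical engine: absorbs 2000 J and rejects 1500 J per cycle
w = engine_work(2000.0, 1500.0)
e = thermal_efficiency(2000.0, 1500.0)
print(f"W = {w:.0f} J, e = {e:.2f}")  # W = 500 J, e = 0.25
```

Rejecting zero heat raises an error on purpose. That is the case Kelvin's statement rules out.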

Refrigerators and the Second Law

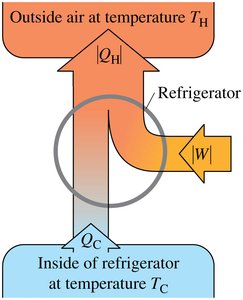

Basic Operation of Refrigerators

Refrigerators are thermal machines that transfer heat from a cold region (inside the refrigerator) to a warmer region (the room), requiring an input of mechanical work. This process is described by the Clausius statement of the Second Law.

Clausius Statement: It is impossible for any process to transfer heat from a colder body to a hotter body without external work input.

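Energy Balance: \( |Q_H| = |Q_C| + |W| \). The heat delivered to the room equals the heat removed from the interior plus the work input.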
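A minimal Python sketch of the refrigerator energy balance follows. As a measure of refrigerator efficiency, it also computes the usual coefficient of performance \(K = |Q_C|/|W|\). All quantities are magnitudes in joules. The function names and the sample values (300 J removed, 100 J of work) are hypothetical.

```python
def heat_to_room(q_cold: float, work_in: float) -> float:
    """Energy balance per cycle: |Q_H| = |Q_C| + |W| (all magnitudes, J)."""
    if q_cold < 0 or work_in <= 0:
        # Clausius statement: moving heat from cold to hot requires a positive work input
        raise ValueError("expect |Q_C| >= 0 and |W| > 0")
    return q_cold + work_in


def coefficient_of_performance(q_cold: float, work_in: float) -> float:
    """Heat removed from the cold region per unit of work input, K = |Q_C| / |W|."""
    if work_in <= 0:
        raise ValueError("a refrigerator needs a positive work input")
    return q_cold / work_in


# Hypothetical refrigerator: removes 300 J from the interior using 100 J of work
print(heat_to_room(300.0, 100.0))                # 400.0
print(coefficient_of_performance(300.0, 100.0))  # 3.0
```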

Entropy: A New State Function

Definition and Properties of Entropy

Entropy (S) is a state function introduced to give the Second Law a quantitative, mathematical form. It is associated with the portion of a system's energy that is unavailable for doing work and is closely related to the concept of molecular disorder.

Infinitesimal Change: For a reversible process, the infinitesimal change in entropy is given by:

\[ dS = \frac{dQ}{T} \]

Here \(dQ\) is the heat absorbed reversibly at absolute temperature \(T\).

State Function: Entropy depends only on the state of the system, not the path taken.

Path Independence: \( \Delta S = S_2 - S_1 \) is determined only by the initial and final states.

Cyclic Processes: For a reversible cyclic process, \( \oint \frac{dQ}{T} = 0 \), so \( \Delta S = 0 \) over the cycle.

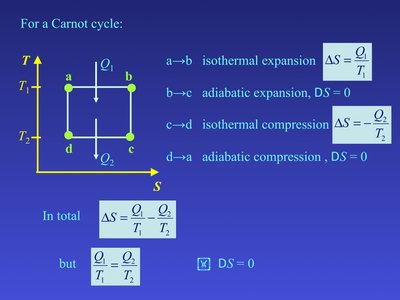

Entropy Changes in the Carnot Cycle

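The Carnot cycle is a reversible cycle that is commonly used to illustrate entropy changes. Heat is exchanged only during the isothermal steps, which makes the entropy calculations straightforward.

Isothermal Expansion (\(a \to b\), at \(T_H\)): \( \Delta S_{ab} = \frac{Q_H}{T_H} > 0 \)

Adiabatic Expansion (\(b \to c\)): \( Q = 0 \), so \( \Delta S_{bc} = 0 \)

Isothermal Compression (\(c \to d\), at \(T_C\)): \( \Delta S_{cd} = \frac{Q_C}{T_C} = -\frac{|Q_C|}{T_C} < 0 \)

Adiabatic Compression (\(d \to a\)): \( Q = 0 \), so \( \Delta S_{da} = 0 \)

For the entire Carnot cycle:

\[ \Delta S = \frac{Q_H}{T_H} + \frac{Q_C}{T_C} = 0 \quad\Longrightarrow\quad \frac{|Q_C|}{Q_H} = \frac{T_C}{T_H} \]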
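The entropy bookkeeping for a Carnot cycle can be checked numerically with a minimal Python sketch that uses an ideal gas. The working substance (1 mol of monatomic ideal gas, \(\gamma = 5/3\)), the temperatures and volumes, and the function name are all illustrative assumptions. The adiabatic legs use \(TV^{\gamma-1} = \) constant to fix the volumes on the cold isotherm.

```python
import math

R = 8.314  # gas constant, J mol^-1 K^-1


def carnot_entropy_changes(n, t_hot, t_cold, v_a, v_b, gamma=5.0 / 3.0):
    """Per-leg entropy changes of an ideal-gas Carnot cycle.

    Legs: a->b isothermal at t_hot, b->c adiabatic, c->d isothermal at t_cold,
    d->a adiabatic. Returns (dict of per-leg dS, Q_H, |Q_C|).
    """
    # Reversible adiabats obey T V^(gamma-1) = const, which fixes V_c and V_d
    ratio = (t_hot / t_cold) ** (1.0 / (gamma - 1.0))
    v_c, v_d = v_b * ratio, v_a * ratio

    q_hot = n * R * t_hot * math.log(v_b / v_a)    # heat absorbed on a->b
    q_cold = n * R * t_cold * math.log(v_c / v_d)  # magnitude of heat rejected on c->d

    steps = {
        "ab": q_hot / t_hot,     # isothermal expansion: +Q_H / T_H
        "bc": 0.0,               # reversible adiabatic: no heat, no entropy change
        "cd": -q_cold / t_cold,  # isothermal compression: -|Q_C| / T_C
        "da": 0.0,
    }
    return steps, q_hot, q_cold


steps, q_h, q_c = carnot_entropy_changes(n=1.0, t_hot=500.0, t_cold=300.0, v_a=1e-3, v_b=2e-3)
print(steps)
print("net dS =", sum(steps.values()))  # ~0, up to floating-point rounding
print("efficiency =", 1 - q_c / q_h)    # ~0.4, i.e. 1 - T_C/T_H
```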

Calculating Entropy Changes

General Formula for Entropy Change

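The entropy change for a finite reversible process from state 1 to state 2 is:

\[ \Delta S = S_2 - S_1 = \int_1^2 \frac{dQ}{T} \]

For heating at constant pressure (isobaric), with mass \(m\) and specific heat \(c\) (assumed constant over the temperature range):

\[ dQ = m c \, dT \]

Thus,

\[ dS = m c \frac{dT}{T} \]

Integrating from \(T_1\) to \(T_2\):

\[ \Delta S = m c \ln\frac{T_2}{T_1} \]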
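The integral can be approximated by summing \(dQ/T\) over many small temperature steps and comparing the result with the closed form \(mc\ln(T_2/T_1)\). The following minimal Python sketch does this with the midpoint rule and assumes a constant \(c\). The function names, the step count, and the sample values (\(m = 1\) kg, \(c = 4190\ \text{J kg}^{-1}\text{K}^{-1}\), 293 K to 373 K) are illustrative assumptions.

```python
import math


def entropy_change_numeric(m, c, t1, t2, steps=10_000):
    """Approximate the integral of dQ/T = m c dT / T with the midpoint rule."""
    dt = (t2 - t1) / steps
    total = 0.0
    for i in range(steps):
        t_mid = t1 + (i + 0.5) * dt  # temperature at the middle of this small step
        total += m * c * dt / t_mid  # dS = dQ / T with dQ = m c dT
    return total


def entropy_change_closed_form(m, c, t1, t2):
    """Delta S = m c ln(T2 / T1), assuming c is constant over the range."""
    return m * c * math.log(t2 / t1)


print(entropy_change_numeric(1.0, 4190.0, 293.0, 373.0))
print(entropy_change_closed_form(1.0, 4190.0, 293.0, 373.0))  # both ~1011 J/K
```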

Example: Heating Water

Consider 1 kg of water heated from 20°C to 100°C at atmospheric pressure. The entropy change is calculated as:

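Taking \( c \approx 4190\ \text{J kg}^{-1}\,\text{K}^{-1} \): \( m c = (1\ \text{kg})(4190\ \text{J kg}^{-1}\,\text{K}^{-1}) = 4190\ \text{J K}^{-1} \)

\( T_1 = 293\ \text{K} \), \( T_2 = 373\ \text{K} \)

\( \Delta S = (4190\ \text{J K}^{-1}) \ln\frac{373\ \text{K}}{293\ \text{K}} \approx 1011\ \text{J K}^{-1} \)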

Heat enters the system, increasing its entropy. The same entropy change results whether the process is reversible or irreversible, as entropy is a state function.

Entropy Change of the Reservoir

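When the reservoir loses heat to the system, its entropy decreases. Assuming the water is heated by a reservoir that stays at 100 °C (\(T_{\text{res}} = 373\ \text{K}\)):

\( Q = m c \,\Delta T = (4190\ \text{J K}^{-1})(80\ \text{K}) \approx 335\ \text{kJ} \)

\( \Delta S_{\text{res}} = -\frac{Q}{T_{\text{res}}} = -\frac{335{,}200\ \text{J}}{373\ \text{K}} \approx -899\ \text{J K}^{-1} \)

Total entropy change: \( \Delta S_{\text{total}} = m c \ln\frac{T_2}{T_1} - \frac{Q}{T_{\text{res}}} \approx 1011 - 899 \approx +113\ \text{J K}^{-1} \) \(>0\)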
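The entropy budget of the water-heating example can be reproduced with the following minimal Python sketch. It assumes \(c = 4190\ \text{J kg}^{-1}\text{K}^{-1}\) and a reservoir held at 373 K. The function name is hypothetical. The water's \(\Delta S\) is evaluated along a reversible path, which is valid because entropy is a state function.

```python
import math


def heating_entropy_budget(m, c, t1, t2, t_reservoir):
    """Entropy changes when a body is heated from t1 to t2 by a reservoir at t_reservoir.

    The body's Delta S is computed along a reversible path (entropy is a state
    function); the reservoir stays at constant temperature, so Delta S_res = -Q / T_res.
    """
    ds_body = m * c * math.log(t2 / t1)
    q = m * c * (t2 - t1)  # heat delivered to the body, J
    ds_res = -q / t_reservoir
    return ds_body, ds_res, ds_body + ds_res


body, res, total = heating_entropy_budget(m=1.0, c=4190.0, t1=293.0, t2=373.0, t_reservoir=373.0)
print(f"{body:.0f} J/K, {res:.0f} J/K, total {total:+.0f} J/K")  # 1011 J/K, -899 J/K, total +113 J/K
```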

Clausius Inequality and the Principle of Increasing Entropy

Clausius Inequality

Clausius derived a general inequality that holds for any cyclic process, reversible or irreversible:

\[ \oint \frac{dQ}{T} \le 0 \]

Equality holds only for a reversible cycle.

For an isolated system, the Second Law can be stated as: No process is possible in which the total entropy decreases when all systems involved are included.

In an isolated system, entropy either increases (irreversible process) or remains constant (reversible process).
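The principle of increasing entropy can be illustrated with a simple isolated system: two reservoirs at fixed temperatures, with heat \(Q\) flowing directly between them. The following is a minimal Python sketch. The function name and the 1000 J, 400 K, and 300 K values are hypothetical. A positive `q` means heat flows from hot to cold, and a negative `q` means the reverse.

```python
def total_entropy_change(q, t_hot, t_cold):
    """Net entropy change of two reservoirs when heat q flows from t_hot to t_cold.

    q > 0: heat flows hot -> cold; q < 0: heat flows cold -> hot.
    """
    ds_hot = -q / t_hot   # hot reservoir loses q
    ds_cold = q / t_cold  # cold reservoir gains q
    return ds_hot + ds_cold


print(total_entropy_change(1000.0, 400.0, 300.0))   # ~ +0.833 J/K: irreversible, allowed
print(total_entropy_change(-1000.0, 400.0, 300.0))  # ~ -0.833 J/K: would violate the Second Law
```

The unaided flow from cold to hot gives a negative total. That is the outcome the Clausius statement forbids.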

Entropy-Temperature (T-S) Diagrams

Using T-S Diagrams

Thermodynamic processes can be represented on T-S diagrams, which plot temperature (T) versus entropy (S). These diagrams are useful for visualizing reversible adiabatic (vertical lines, S constant) and isothermal (horizontal lines, T constant) processes.

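Adiabatic process (reversible): \( dQ = 0 \), so \( dS = 0 \) and \( S = \) constant (vertical line)

Isothermal process: \( T = \) constant, with \( \Delta S = Q/T \) (horizontal line)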
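On a T-S diagram, a Carnot cycle is a rectangle: two horizontal isotherms and two vertical reversible adiabats. For a reversible cycle, \(\oint T\,dS\) (the enclosed area) equals the net heat absorbed, which is the net work. The following minimal Python sketch computes that area with the shoelace formula. The function names and the corner values are hypothetical.

```python
def carnot_ts_corners(t_hot, t_cold, s_low, s_high):
    """Corners (S, T) of a Carnot cycle on a T-S diagram, traversed clockwise."""
    return [
        (s_low, t_hot),    # a: start of isothermal expansion (horizontal line at T_H)
        (s_high, t_hot),   # b: end of expansion; reversible adiabat drops vertically
        (s_high, t_cold),  # c: start of isothermal compression at T_C
        (s_low, t_cold),   # d: end of compression; reversible adiabat rises back to a
    ]


def enclosed_area(points):
    """Shoelace formula; for a reversible cycle this area is the net work per cycle."""
    area = 0.0
    n = len(points)
    for i in range(n):
        x1, y1 = points[i]
        x2, y2 = points[(i + 1) % n]
        area += x1 * y2 - x2 * y1
    return abs(area) / 2.0


corners = carnot_ts_corners(t_hot=500.0, t_cold=300.0, s_low=10.0, s_high=15.0)
print(enclosed_area(corners))  # 1000.0, equal to (T_H - T_C) * Delta S
```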

Microscopic Interpretation of Entropy

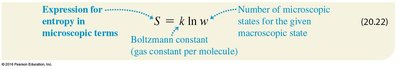

Boltzmann's Entropy Formula

On the microscopic level, entropy is related to the number of possible microstates (w) corresponding to a given macrostate. Boltzmann's formula expresses this relationship:

\[ S = k \ln w \]

k: Boltzmann constant (\( k \approx 1.381 \times 10^{-23}\ \text{J K}^{-1} \))

w: Number of microstates for the macrostate
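Boltzmann's formula can be illustrated with a toy model. Assume 10 distinguishable molecules, each of which can sit in either the left or right half of a box. The macrostate "\(n_\text{left}\) molecules on the left" then has \(w = \binom{10}{n_\text{left}}\) microstates. The following minimal Python sketch computes \(w\) and \(S = k\ln w\). The model and the function names are hypothetical.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant, J/K (exact SI value)


def microstates(n_molecules, n_left):
    """Ways to choose which n_left of n_molecules distinguishable molecules are in the left half."""
    return math.comb(n_molecules, n_left)


def boltzmann_entropy(w):
    """S = k ln w."""
    return K_B * math.log(w)


for n_left in (0, 2, 5):
    w = microstates(10, n_left)
    print(n_left, w, boltzmann_entropy(w))  # w = 1, 45, 252; S grows with w
```

The evenly spread macrostate (5 and 5) has the most microstates and therefore the highest entropy.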

Example: Free Expansion of a Gas

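When \(n\) moles of gas (\(N\) molecules) expand freely to double their volume at temperature \(T\), each molecule has twice as many accessible positions. The number of microstates therefore increases by a factor of \(2^N\):

\[ \Delta S = k \ln\frac{w_2}{w_1} = k \ln 2^N = N k \ln 2 = n R \ln 2 \]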
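The free-expansion result can be evaluated in Python with a minimal sketch. It assumes 1 mol of gas and generalizes "double the volume" to a hypothetical `volume_ratio` parameter, so that each molecule gains `volume_ratio` times as many positions. Forming \(2^N\) directly would overflow for \(N \sim 10^{23}\), so the code works with the logarithm.

```python
import math

K_B = 1.380649e-23   # Boltzmann constant, J/K
N_A = 6.02214076e23  # Avogadro constant, 1/mol
R = K_B * N_A        # gas constant, ~8.314 J/(mol K)


def free_expansion_entropy(n_moles, volume_ratio=2.0):
    """Delta S = k ln(w2/w1), with w2/w1 = volume_ratio**N, i.e. N k ln(volume_ratio)."""
    n_molecules = n_moles * N_A
    # Use ln(volume_ratio**N) = N ln(volume_ratio); the power itself would overflow
    return K_B * n_molecules * math.log(volume_ratio)


ds = free_expansion_entropy(1.0)
print(ds, R * math.log(2.0))  # both ~5.76 J/K, i.e. n R ln 2
```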

Entropy and Disorder

Physical Meaning of Entropy

Entropy is often described as a measure of disorder or randomness. Many natural processes proceed in the direction of increasing entropy, reflecting a tendency toward greater molecular disorder.

Adding heat increases molecular motion and randomness.

Free expansion of a gas increases the number of accessible microstates.

Explosions and mixing processes increase entropy and disorder.

References to Entropy in Popular Culture

Entropy and the Second Law are referenced in literature, philosophy, ecology, and even music, reflecting their broad conceptual impact beyond physics.