Time Series Analysis and Forecasting in Econometrics: Concepts, Methods, and Applications

Study Guide - Smart Notes

Time Series Data and Forecasting

Prediction vs. Causation

In time series econometrics, it is crucial to distinguish between prediction (forecasting) and causation. Forecasting focuses on predicting future values based on past observations, while causal analysis seeks to understand the effect of one variable on another. For forecasting, the primary goal is accuracy in out-of-sample predictions, not causal interpretation of coefficients.

Types of Time Series Data Transformations

Levels: The raw values of a variable over time.

Differences (First Differences): The change in the variable from one period to the next, denoted $\Delta Y_t = Y_t - Y_{t-1}$.

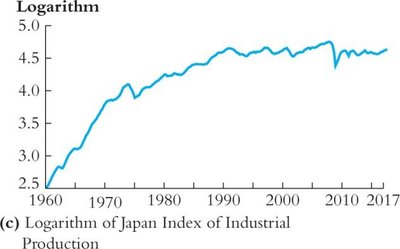

Logarithms: The natural log transformation, $\ln(Y_t)$, often used to stabilize variance; changes in logs approximate growth rates.

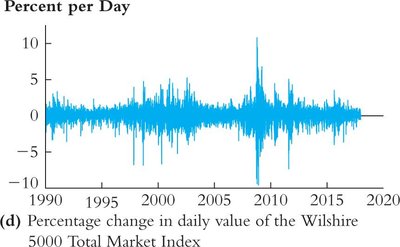

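Percent Changes: The percentage change from one period to the next, $100 \times \frac{Y_t - Y_{t-1}}{Y_{t-1}}$, often approximated by $100 \times \Delta\ln(Y_t)$ for small changes.

Changes in Logarithms: $\Delta\ln(Y_t) = \ln(Y_t) - \ln(Y_{t-1})$.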

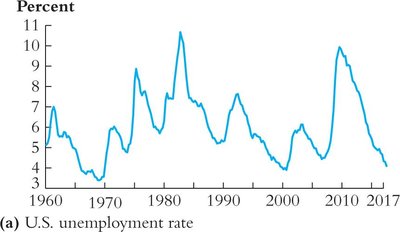

Portion of the Total: Expressing a variable as a percentage of a total (e.g., unemployment rate).

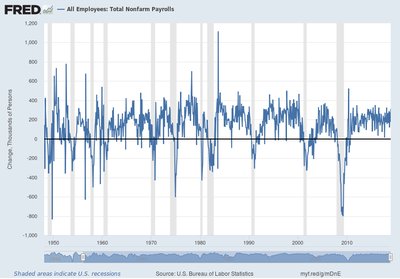
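The transformations above can be sketched in plain Python. The function names and the four-point series are hypothetical, chosen only for illustration. The sketch also compares the exact percent change with the log approximation: for the 1% move from 100 to 101 the two nearly coincide (about 0.995 versus 1.0), while for the jump from 103 to 120 the log approximation is noticeably smaller (about 15.3 versus about 16.5).

```python
import math

def first_difference(y):
    """Delta Y_t = Y_t - Y_{t-1}; the result has one fewer element than y."""
    return [y[t] - y[t - 1] for t in range(1, len(y))]

def percent_change(y):
    """Exact percent change: 100 * (Y_t - Y_{t-1}) / Y_{t-1}."""
    return [100 * (y[t] - y[t - 1]) / y[t - 1] for t in range(1, len(y))]

def log_difference_pct(y):
    """Log approximation to the percent change: 100 * [ln(Y_t) - ln(Y_{t-1})]."""
    return [100 * (math.log(y[t]) - math.log(y[t - 1])) for t in range(1, len(y))]

def share_of_total(part, total):
    """A variable as a percentage of a total, e.g. unemployed / labor force."""
    return [100 * p / q for p, q in zip(part, total)]

# Hypothetical levels, for illustration only (not real data).
levels = [100.0, 101.0, 103.0, 120.0]
print(first_difference(levels))  # [1.0, 2.0, 17.0]
for exact, approx in zip(percent_change(levels), log_difference_pct(levels)):
    print(f"exact {exact:6.3f}   log approx {approx:6.3f}")
```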

Example: Data in First Differences

The first difference transformation is commonly used to analyze changes rather than levels, especially when the data exhibit trends or nonstationarity.

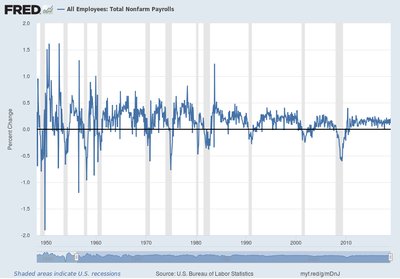

Example: Changes in Logarithms, % Change

Taking the difference of logarithms approximates the percentage change, which is useful for interpreting growth rates in economic data.

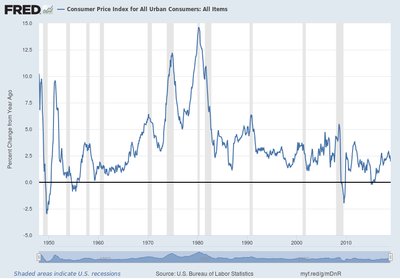

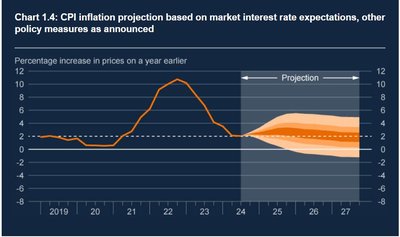

Example: Consumer Price Index (CPI) Changes

Year-over-year percent changes in the CPI are used to measure inflation; for monthly data this is $100 \times \frac{CPI_t - CPI_{t-12}}{CPI_{t-12}}$.
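For monthly data, the year-over-year inflation rate compares each month's CPI with the CPI twelve months earlier. A minimal sketch, assuming a plain list of monthly index values; the function name `yoy_inflation` and the CPI path are hypothetical.

```python
def yoy_inflation(cpi, periods_per_year=12):
    """Year-over-year percent change: 100 * (CPI_t / CPI_{t-k} - 1), k = periods per year.

    The first k periods have no value one year earlier, so the result has
    len(cpi) - k elements.
    """
    k = periods_per_year
    return [100 * (cpi[t] / cpi[t - k] - 1) for t in range(k, len(cpi))]

# Hypothetical monthly CPI rising by 0.25 index points per month (not real data).
cpi = [100 + 0.25 * m for m in range(25)]
inflation = yoy_inflation(cpi)
print(len(inflation), round(inflation[0], 2))  # 13 3.0
```

Setting `periods_per_year=4` gives the analogous calculation for quarterly data.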

Lagging the Data and Autocorrelation

Lags and Differences

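Lag: The value of a variable from a previous period; the first lag is $Y_{t-1}$ and the $j$-th lag is $Y_{t-j}$.

First Difference: $\Delta Y_t = Y_t - Y_{t-1}$.

First Difference of Logarithm: $\Delta\ln(Y_t) = \ln(Y_t) - \ln(Y_{t-1})$.

Percentage Change Approximation: $100 \times \frac{Y_t - Y_{t-1}}{Y_{t-1}} \approx 100 \times \Delta\ln(Y_t)$.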
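Lags and differences are easiest to keep straight when every transformed series stays aligned with the original dates. A small sketch, assuming periods without data are marked with `None`; the helper name `lag` is hypothetical.

```python
def lag(y, j=1):
    """j-th lag of y: element t is Y_{t-j}; the first j periods have no data (None)."""
    if not 0 <= j <= len(y):
        raise ValueError("lag order must be between 0 and len(y)")
    return [None] * j + y[:len(y) - j]

y = [2.0, 3.0, 5.0, 4.0]
y_lag1 = lag(y, 1)  # [None, 2.0, 3.0, 5.0]
# First difference built from the lag, still aligned with the original dates.
delta_y = [None if prev is None else cur - prev for cur, prev in zip(y, y_lag1)]
print(delta_y)  # [None, 1.0, 2.0, -1.0]
```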

Autocorrelation (Serial Correlation)

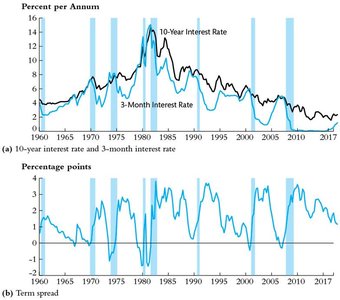

Autocorrelation measures the correlation of a time series with its own past values. It is a key concept in time series analysis, indicating the persistence of shocks over time.

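First Autocovariance: $\operatorname{cov}(Y_t, Y_{t-1})$

First Autocorrelation: $\rho_1 = \operatorname{corr}(Y_t, Y_{t-1}) = \frac{\operatorname{cov}(Y_t, Y_{t-1})}{\sqrt{\operatorname{var}(Y_t)\operatorname{var}(Y_{t-1})}}$

j-th Autocorrelation: $\rho_j = \operatorname{corr}(Y_t, Y_{t-j}) = \frac{\operatorname{cov}(Y_t, Y_{t-j})}{\sqrt{\operatorname{var}(Y_t)\operatorname{var}(Y_{t-j})}}$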
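The population autocorrelations are estimated with sample analogues. Textbooks differ in small details (for example, whether separate means are used for the current and lagged observations); this sketch assumes the full-sample mean and a divisor of $T$, which gives nearly identical results in large samples. The function names are hypothetical.

```python
def sample_autocovariance(y, j):
    """Sample j-th autocovariance: (1/T) * sum_{t=j+1}^{T} (Y_t - Ybar)(Y_{t-j} - Ybar)."""
    T = len(y)
    y_bar = sum(y) / T
    return sum((y[t] - y_bar) * (y[t - j] - y_bar) for t in range(j, T)) / T

def sample_autocorrelation(y, j):
    """rho_hat_j = autocovariance_j / autocovariance_0 (the sample variance)."""
    return sample_autocovariance(y, j) / sample_autocovariance(y, 0)

print(sample_autocorrelation([1, 2, 3, 4, 5], 1))  # 0.4 (persistent series)
print(sample_autocorrelation([1, -1, 1, -1], 1))   # -0.75 (alternating series)
```

A positive first autocorrelation means high values tend to follow high values; the alternating series shows the opposite pattern.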

Forecasting Models and Stationarity

Forecasting Basics

Forecasting uses historical data to predict future values. Forecasts are reliable only if the future (out-of-sample) period resembles the historical (in-sample) period; formally, this requires the series to be stationary.

Stationarity

A time series is stationary if its probability distribution does not change over time. Stationarity is essential for reliable forecasting, as it ensures that the relationships observed in the past persist into the future.

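Strict Stationarity: The joint distribution of $(Y_{s+1}, Y_{s+2}, \ldots, Y_{s+T})$ does not depend on $s$, for any $T$.

Joint Stationarity: Two series $X_t$ and $Y_t$ are jointly stationary if the joint distribution of $(X_{s+1}, Y_{s+1}, X_{s+2}, Y_{s+2}, \ldots, X_{s+T}, Y_{s+T})$ does not depend on $s$, for any $T$.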

Forecasts and Forecast Errors

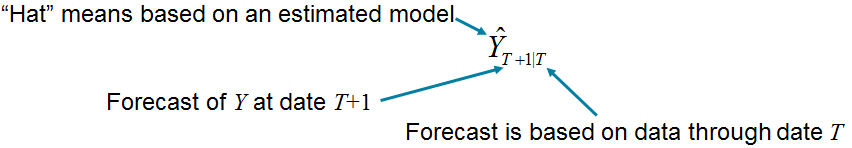

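Forecast: An out-of-sample prediction; e.g., $Y_{T+1\mid T}$ is the forecast of $Y_{T+1}$ based on data through period $T$, and $\hat{Y}_{T+1\mid T}$ is that forecast computed from estimated coefficients.

Forecast Error: $Y_{T+1} - \hat{Y}_{T+1\mid T}$

Mean Squared Forecast Error (MSFE): $E\left[(Y_{T+1} - \hat{Y}_{T+1\mid T})^2\right]$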
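The MSFE is an expectation, so in practice it is estimated by averaging squared errors over a set of forecasts whose outcomes are known. A minimal sketch; the function names and the numbers are hypothetical and purely illustrative.

```python
def forecast_errors(actuals, forecasts):
    """Forecast errors Y_{T+1} - Yhat_{T+1|T}, paired by position."""
    if len(actuals) != len(forecasts):
        raise ValueError("actuals and forecasts must have the same length")
    return [a - f for a, f in zip(actuals, forecasts)]

def sample_msfe(actuals, forecasts):
    """Average squared forecast error, a sample estimate of the MSFE."""
    errors = forecast_errors(actuals, forecasts)
    return sum(e * e for e in errors) / len(errors)

actuals = [2.0, 3.0, 1.0]    # hypothetical realized values
forecasts = [1.5, 3.5, 1.0]  # hypothetical one-step-ahead forecasts
print(forecast_errors(actuals, forecasts))  # [0.5, -0.5, 0.0]
print(sample_msfe(actuals, forecasts))
```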

Autoregressive and ADL Models

Autoregressive (AR) Models

An autoregressive model predicts a variable using its own past values. The order of the model, $p$, is the number of lags included.

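AR(1) Model: $Y_t = \beta_0 + \beta_1 Y_{t-1} + u_t$

AR(p) Model: $Y_t = \beta_0 + \beta_1 Y_{t-1} + \beta_2 Y_{t-2} + \cdots + \beta_p Y_{t-p} + u_t$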
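For the AR(1) the OLS estimates have a closed form: regress $Y_t$ on a constant and $Y_{t-1}$, then forecast $\hat{Y}_{T+1\mid T} = \hat\beta_0 + \hat\beta_1 Y_T$. A minimal sketch on simulated data; the function names and the true coefficients (1 and 0.5) are illustrative assumptions. For $p > 1$ the same idea requires a multiple regression.

```python
import random

def fit_ar1(y):
    """OLS estimates (beta0_hat, beta1_hat) of Y_t = beta0 + beta1 * Y_{t-1} + u_t."""
    x = y[:-1]  # regressor: Y_{t-1}
    z = y[1:]   # dependent variable: Y_t
    n = len(z)
    x_bar, z_bar = sum(x) / n, sum(z) / n
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxz = sum((xi - x_bar) * (zi - z_bar) for xi, zi in zip(x, z))
    beta1 = sxz / sxx
    beta0 = z_bar - beta1 * x_bar
    return beta0, beta1

def forecast_ar1(y, beta0, beta1):
    """One-step-ahead forecast Yhat_{T+1|T} = beta0_hat + beta1_hat * Y_T."""
    return beta0 + beta1 * y[-1]

# Simulated data from a hypothetical AR(1) with beta0 = 1 and beta1 = 0.5.
random.seed(1)
y = [0.0]
for _ in range(500):
    y.append(1.0 + 0.5 * y[-1] + random.gauss(0, 1))

b0, b1 = fit_ar1(y)
print(f"beta0_hat={b0:.3f}  beta1_hat={b1:.3f}  forecast={forecast_ar1(y, b0, b1):.3f}")
```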

Autoregressive Distributed Lag (ADL) Models

The ADL model extends the AR model by including lagged values of other predictors (X variables):

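ADL(p, q) Model: $Y_t = \beta_0 + \beta_1 Y_{t-1} + \cdots + \beta_p Y_{t-p} + \delta_1 X_{t-1} + \cdots + \delta_q X_{t-q} + u_t$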
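With several regressors the OLS coefficients solve the normal equations $(X'X)b = X'y$. The sketch below stays in plain Python so it needs no external packages: it builds the ADL(p, q) regressors and solves the normal equations by Gaussian elimination. `fit_adl` and `solve` are hypothetical helper names, and the data are simulated from illustrative coefficients; in practice a linear-algebra or econometrics library would normally be used.

```python
import random

def solve(a, b):
    """Solve the square system a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]  # augmented matrix [a | b]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x

def fit_adl(y, x, p, q):
    """OLS for the ADL(p, q); returns [beta0, beta1..beta_p, delta1..delta_q]."""
    start = max(p, q)  # first period with all p lags of Y and q lags of X available
    rows, targets = [], []
    for t in range(start, len(y)):
        rows.append([1.0] + [y[t - i] for i in range(1, p + 1)]
                    + [x[t - j] for j in range(1, q + 1)])
        targets.append(y[t])
    k = len(rows[0])
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(k)] for i in range(k)]
    xty = [sum(r[i] * yt for r, yt in zip(rows, targets)) for i in range(k)]
    return solve(xtx, xty)

# Simulated ADL(1, 1): illustrative coefficients 0.5 (intercept), 0.3 (Y_{t-1}), 0.8 (X_{t-1}).
random.seed(2)
x = [random.gauss(0, 1) for _ in range(400)]
y = [0.0]
for t in range(1, 400):
    y.append(0.5 + 0.3 * y[t - 1] + 0.8 * x[t - 1] + random.gauss(0, 1))
print([round(c, 3) for c in fit_adl(y, x, p=1, q=1)])
```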

Model Selection and Forecast Evaluation

Information Criteria for Lag Selection

Choosing the appropriate lag length is crucial for model performance. Two common criteria are:

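Bayesian Information Criterion (BIC): $\text{BIC}(p) = \ln\left(\frac{SSR(p)}{T}\right) + (p+1)\frac{\ln T}{T}$

Akaike Information Criterion (AIC): $\text{AIC}(p) = \ln\left(\frac{SSR(p)}{T}\right) + (p+1)\frac{2}{T}$

Here $SSR(p)$ is the sum of squared residuals of the estimated AR(p), $T$ is the number of observations, and the chosen $p$ minimizes the criterion. BIC penalizes model complexity more heavily than AIC (its per-coefficient penalty $\ln T / T$ exceeds $2/T$ whenever $T \geq 8$), often resulting in more parsimonious models.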
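Lag selection then amounts to evaluating each criterion for every candidate $p$ and taking the minimizer. A minimal sketch; the function names are hypothetical, and the SSR values are made-up numbers chosen to show the two criteria disagreeing, not results from real data.

```python
import math

def bic(ssr, T, p):
    """BIC(p) = ln(SSR(p)/T) + (p + 1) * ln(T) / T."""
    return math.log(ssr / T) + (p + 1) * math.log(T) / T

def aic(ssr, T, p):
    """AIC(p) = ln(SSR(p)/T) + (p + 1) * 2 / T."""
    return math.log(ssr / T) + (p + 1) * 2 / T

def select_lag(ssr_by_p, T, criterion):
    """Return the lag length p that minimizes the given criterion."""
    return min(ssr_by_p, key=lambda p: criterion(ssr_by_p[p], T, p))

# Hypothetical SSRs for AR(0) to AR(3), all estimated over the same T = 100 observations.
ssr_by_p = {0: 200.0, 1: 120.0, 2: 115.0, 3: 114.5}
print(select_lag(ssr_by_p, 100, bic))  # 1
print(select_lag(ssr_by_p, 100, aic))  # 2
```

Adding the second lag lowers the SSR by enough to pay AIC's penalty but not BIC's larger one. All candidate models should be estimated over the same sample so their SSRs are comparable.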

Forecast Intervals and Uncertainty

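Root Mean Squared Forecast Error (RMSFE): $\text{RMSFE} = \sqrt{E\left[(Y_{T+1} - \hat{Y}_{T+1\mid T})^2\right]}$

Forecast Interval (95%): $\hat{Y}_{T+1\mid T} \pm 1.96 \times \widehat{\text{RMSFE}}$, assuming the forecast error is normally distributed.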
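One simple way to estimate the RMSFE is the square root of the average squared error from a set of past out-of-sample forecasts (other estimators, such as ones based on the regression's standard error, also exist). A minimal sketch; the function names, errors, and point forecast are hypothetical.

```python
import math

def rmsfe_from_errors(errors):
    """Estimate the RMSFE as sqrt(mean of squared forecast errors)."""
    return math.sqrt(sum(e * e for e in errors) / len(errors))

def forecast_interval_95(point_forecast, rmsfe):
    """Yhat +/- 1.96 * RMSFE; a 95% interval only if forecast errors are roughly normal."""
    half_width = 1.96 * rmsfe
    return point_forecast - half_width, point_forecast + half_width

# Hypothetical past forecast errors and a hypothetical new point forecast.
errors = [0.4, -0.3, 0.5, -0.6, 0.2]
rmsfe = rmsfe_from_errors(errors)
low, high = forecast_interval_95(2.0, rmsfe)
print(f"RMSFE={rmsfe:.3f}  95% interval=({low:.3f}, {high:.3f})")
```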

Nonstationarity: Trends and Structural Breaks

Trends in Time Series Data

A trend is a persistent, long-term movement in a time series. Trends can be deterministic (e.g., linear) or stochastic (random walk).

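Deterministic Trend: $Y_t = \beta_0 + \beta_1 t + u_t$

Stochastic Trend (Random Walk): $Y_t = Y_{t-1} + u_t$, or with drift, $Y_t = \beta_0 + Y_{t-1} + u_t$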
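Feeding the same shocks into both models shows the key difference: with a deterministic trend a shock affects only its own period, while in a random walk every shock is carried forward permanently. A minimal simulation sketch; the function names, the slope of 0.1, and the drift of 0.1 are illustrative assumptions.

```python
import random

def deterministic_trend(shocks, beta0, beta1):
    """Y_t = beta0 + beta1 * t + u_t for t = 1, ..., T."""
    return [beta0 + beta1 * t + u for t, u in enumerate(shocks, start=1)]

def random_walk(shocks, drift=0.0, y0=0.0):
    """Y_t = drift + Y_{t-1} + u_t starting from Y_0 = y0 (Y_0 itself is not returned)."""
    path, level = [], y0
    for u in shocks:
        level = drift + level + u
        path.append(level)
    return path

# A single shock of size 1 in period 1, then no shocks.
pulse = [1.0, 0.0, 0.0, 0.0]
print(deterministic_trend(pulse, beta0=0.0, beta1=0.0))  # [1.0, 0.0, 0.0, 0.0]
print(random_walk(pulse))                                 # [1.0, 1.0, 1.0, 1.0]

# Longer simulated paths driven by the same Gaussian shocks.
random.seed(42)
shocks = [random.gauss(0, 1) for _ in range(200)]
trend_path = deterministic_trend(shocks, beta0=0.0, beta1=0.1)
walk_path = random_walk(shocks, drift=0.1)
```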

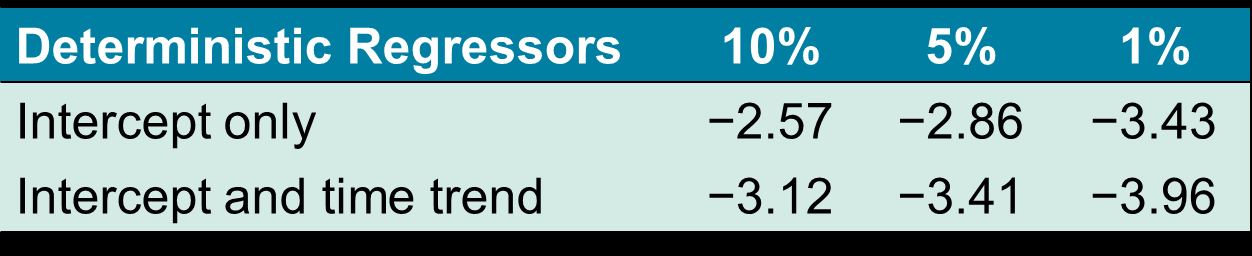

Unit Roots and the Dickey-Fuller Test

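A unit root in an AR(1) model ($\beta_1 = 1$) implies a stochastic trend. The Dickey-Fuller test is used to test for unit roots:

DF Test Regression: $\Delta Y_t = \beta_0 + \delta Y_{t-1} + u_t$, where $\delta = \beta_1 - 1$

Null Hypothesis: $H_0: \delta = 0$ (unit root, nonstationary)

Alternative Hypothesis: $H_1: \delta < 0$ (stationary)

Under the null, the t-statistic on $\delta$ does not have a standard normal distribution, so it is compared with Dickey-Fuller critical values rather than normal ones.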
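A minimal sketch of the Dickey-Fuller statistic for the intercept-only regression $\Delta Y_t = \beta_0 + \delta Y_{t-1} + u_t$, computed by OLS with homoskedasticity-only standard errors. It omits the lagged differences that the augmented (ADF) version adds; the 5% critical value of -2.86 is the standard large-sample value for this intercept-only case, and the simulated series are illustrative.

```python
import math
import random

def dickey_fuller_t(y):
    """t-statistic on delta in Delta Y_t = beta0 + delta * Y_{t-1} + u_t (no lagged differences)."""
    x = y[:-1]                                        # Y_{t-1}
    dy = [y[t] - y[t - 1] for t in range(1, len(y))]  # Delta Y_t
    n = len(dy)
    x_bar, dy_bar = sum(x) / n, sum(dy) / n
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    delta = sum((xi - x_bar) * (di - dy_bar) for xi, di in zip(x, dy)) / sxx
    beta0 = dy_bar - delta * x_bar
    ssr = sum((di - beta0 - delta * xi) ** 2 for xi, di in zip(x, dy))
    se_delta = math.sqrt(ssr / (n - 2) / sxx)         # homoskedasticity-only SE
    return delta / se_delta

DF_CRIT_5PCT = -2.86  # large-sample 5% critical value, intercept only

random.seed(0)
noise = [random.gauss(0, 1) for _ in range(500)]  # white noise: no unit root
walk, level = [], 0.0
for e in noise:
    level += e
    walk.append(level)                            # random walk: unit root

for name, series in [("white noise", noise), ("random walk", walk)]:
    t_stat = dickey_fuller_t(series)
    decision = "reject unit root" if t_stat < DF_CRIT_5PCT else "do not reject unit root"
    print(f"{name}: t = {t_stat:.2f}, {decision}")
```

For the white-noise series the statistic lies far below -2.86; for a random walk it usually does not, although a 5% test will still reject a true unit root about one time in twenty.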

Structural Breaks and Model Stability

Testing for Structural Breaks

Structural breaks occur when the relationship between variables changes over time. The Chow test (for known break dates) and the Quandt Likelihood Ratio (QLR) test (for unknown break dates) are used to detect such breaks.

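Chow Test: Tests for parameter stability at a known break date $\tau$ by adding a binary variable $D_t(\tau)$ (equal to 1 for $t \geq \tau$ and 0 otherwise) and its interactions with the regressors, then using an F-statistic to test whether their coefficients are jointly zero.

QLR Test: The maximum of the Chow F-statistics over a range of possible break dates, commonly the central 70% of the sample (15% trimming at each end). Because it is a maximum, its critical values are larger than those of the ordinary F distribution.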
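The QLR logic can be sketched for the simplest case, a constant-only regression whose intercept may shift at an unknown date: compute the Chow F-statistic (one restriction) at every candidate date in the trimmed range and keep the maximum. The helper names and the test series are hypothetical; a real application would test all coefficients of the forecasting regression and compare the result with QLR critical values, not F critical values.

```python
def ssr_mean(values):
    """SSR of a constant-only regression: squared deviations from the mean."""
    m = sum(values) / len(values)
    return sum((v - m) ** 2 for v in values)

def chow_f_mean_shift(y, tau):
    """Chow F-statistic for a shift in the mean starting at index tau (q = 1 restriction)."""
    n = len(y)
    ssr_restricted = ssr_mean(y)                              # one mean for all periods
    ssr_unrestricted = ssr_mean(y[:tau]) + ssr_mean(y[tau:])  # separate means before/after tau
    q, k = 1, 2  # restrictions tested; coefficients in the unrestricted model
    return ((ssr_restricted - ssr_unrestricted) / q) / (ssr_unrestricted / (n - k))

def qlr(y, trim=0.15):
    """Max Chow F over break dates in the central (1 - 2 * trim) share of the sample.

    Assumes trim * len(y) >= 1 so both segments are nonempty; returns (statistic, tau).
    """
    n = len(y)
    lo = int(trim * n)
    hi = n - lo
    best_f, best_tau = float("-inf"), None
    for tau in range(lo, hi + 1):
        f = chow_f_mean_shift(y, tau)
        if f > best_f:
            best_f, best_tau = f, tau
    return best_f, best_tau

# Hypothetical series whose mean jumps from 0.5 to 5.5 at index 50.
y = [0.0, 1.0] * 25 + [5.0, 6.0] * 25
stat, tau = qlr(y)
print(round(stat, 1), tau)  # 2450.0 50
```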

Pseudo Out-of-Sample (POOS) Forecasting

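POOS forecasting simulates real-time forecasting: the model is estimated using only data up to a given date and used to forecast the next period, then re-estimated each time one more observation is added, with each forecast compared to the actual outcome. The resulting forecast errors provide an estimate of the RMSFE and help detect model instability near the end of the sample.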
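A minimal POOS sketch for one-step-ahead AR(1) forecasts with an expanding estimation window. `fit_ar1` and `poos_ar1` are hypothetical helper names, the data are simulated from illustrative coefficients, and the square root of the mean squared POOS error is used as the RMSFE estimate.

```python
import math
import random

def fit_ar1(y):
    """OLS estimates (beta0_hat, beta1_hat) of Y_t = beta0 + beta1 * Y_{t-1} + u_t."""
    x, z = y[:-1], y[1:]
    n = len(z)
    x_bar, z_bar = sum(x) / n, sum(z) / n
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    beta1 = sum((xi - x_bar) * (zi - z_bar) for xi, zi in zip(x, z)) / sxx
    return z_bar - beta1 * x_bar, beta1

def poos_ar1(y, first_forecast):
    """Pseudo out-of-sample one-step AR(1) forecasts.

    For each s from first_forecast to len(y) - 1: estimate on y[:s] only, forecast
    y[s] from y[s - 1], and record the error. Returns (errors, POOS RMSFE estimate).
    """
    errors = []
    for s in range(first_forecast, len(y)):
        b0, b1 = fit_ar1(y[:s])  # uses data through period s - 1 only
        errors.append(y[s] - (b0 + b1 * y[s - 1]))
    return errors, math.sqrt(sum(e * e for e in errors) / len(errors))

# Simulated AR(1) with illustrative beta0 = 1, beta1 = 0.5; forecast the last 50 periods.
random.seed(3)
y = [0.0]
for _ in range(249):
    y.append(1.0 + 0.5 * y[-1] + random.gauss(0, 1))
errors, rmsfe = poos_ar1(y, first_forecast=200)
print(len(errors), round(rmsfe, 3))
```

Plotting the POOS errors over time is a simple way to see whether forecast performance deteriorates toward the end of the sample.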

Summary Table: Key Concepts and Formulas

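| Concept | Formula / Description |
|---|---|
| First Difference | $\Delta Y_t = Y_t - Y_{t-1}$ |
| Log Difference (Approx. % Change) | $100 \times \Delta\ln(Y_t) \approx 100 \times \frac{Y_t - Y_{t-1}}{Y_{t-1}}$ |
| AR(1) Model | $Y_t = \beta_0 + \beta_1 Y_{t-1} + u_t$ |
| ADL(p, q) Model | $Y_t = \beta_0 + \beta_1 Y_{t-1} + \cdots + \beta_p Y_{t-p} + \delta_1 X_{t-1} + \cdots + \delta_q X_{t-q} + u_t$ |
| Forecast Error | $Y_{T+1} - \hat{Y}_{T+1\mid T}$ |
| MSFE | $E\left[(Y_{T+1} - \hat{Y}_{T+1\mid T})^2\right]$ |
| RMSFE | $\sqrt{\text{MSFE}}$ |
| BIC | $\ln\left(\frac{SSR(p)}{T}\right) + (p+1)\frac{\ln T}{T}$ |
| AIC | $\ln\left(\frac{SSR(p)}{T}\right) + (p+1)\frac{2}{T}$ |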