Making the switch to a completely flipped classroom

Bill D. Richards

Geology/Geography Instructor, North Idaho College

What possessed me? What possessed me to pull the trigger and switch from a traditional, although excellently delivered, lecture model to a completely flipped in-class experience? As with many of my geoscience education colleagues, I was looking for methods to increase student success without compromising content or assessment rigor. The motivation to take that final step, or leap, came at the close of the spring 2014 semester when I experienced an undeniable increase in exam averages over previous semesters, with just one three-week trial of Learning Catalytics from Pearson.

For both of the freshman geoscience courses I taught last spring, Physical Geology and Physical Geography, I decided to give the "flipping" experiment a try for the last three and a half weeks of the semester (one major exam’s worth of material, the fourth of the semester). Exam averages rose an outstanding 13.5% over the corresponding exam in previous semesters! Further, students’ written comments on end-of-semester evaluations expressed the desire to have those experiences for 100% of class time throughout the entire semester, not just part of it.

The decision was, therefore, to take the leap and convert the entire PowerPoint-based lecture model to a "flipped" model utilizing Learning Catalytics. The craziness and pitfalls of such a decision can be presented another time, but for now, I would like to present the results of the success I have had, controlling for as many variables as possible.

The spring 2014 and fall 2014 semesters are compared because both semesters required students in the compared sections to complete the same MasteringGeology homework assignments, both semesters utilized the same assessment criteria on each of the exams (exam copies were not returned to students, but retained in my office files), and both semesters utilized the same edition of the Earth textbook by Tarbuck and Lutgens. Only the first three exams of the semester are compared because the fourth exam of spring 2014 included the use of the flipped model.

The most telling metric, I feel, is the distribution of scores for the average of the three exams. As shown in Table 1, the percentage of the class scoring 80% or better increased noticeably between the semester without Learning Catalytics (LC) and the current semester with LC, rising from 21% of the students to 32% of the students. Just as important, the percentage of the class scoring less than 70% decreased from 50% of the students to 36% of the students. This result indicates the flipped model may serve to push some "B" students into the "A" range, push some "C" students into the "B" range, and decrease the overall number of "D" and "F" students.

Another comparison (Table 2) shows that the three-exam average score for each quartile of students rose consistently, by about 3 percentage points (between 2.7 and 3.4), in the flipped class compared with the non-flipped class.

Table 1: Score Distributions (% of class in each grade group)

| Overall Exam Grade Average | Spring 2014 (without LC) | Fall 2014 (with LC) |
| --- | --- | --- |
| >= 80% | 21.0% | 32.0% |
| 70.0 - 79.5% | 32.0% | 32.0% |
| 60.0 - 69.5% | 32.0% | 24.0% |
| <= 59.5% | 18.0% | 12.0% |

Table 2: Overall Exam Averages (Exams 1, 2, & 3)

| Quartile | Spring 2014 (without LC) | Fall 2014 (with LC) |
| --- | --- | --- |
| Upper quartile | 82.5% | 85.9% |
| 2nd quartile | 75.1% | 78.3% |
| 3rd quartile | 67.2% | 69.9% |
| Bottom quartile | 55.6% | 58.9% |
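For readers who want to check the quartile comparison themselves, the arithmetic behind Table 2 can be reproduced in a few lines. The following is only a minimal sketch in Python using the values printed in the table; the names `table2` and `quartile_gains` are illustrative and are not part of Learning Catalytics or any tool used in the course.

```python
# Three-exam averages by quartile, copied from Table 2 (values in percent).
# Each entry is (Spring 2014 without LC, Fall 2014 with LC).
table2 = {
    "upper quartile": (82.5, 85.9),
    "2nd quartile": (75.1, 78.3),
    "3rd quartile": (67.2, 69.9),
    "bottom quartile": (55.6, 58.9),
}


def quartile_gains(table):
    """Return the Fall-minus-Spring difference, in percentage points, per quartile."""
    return {name: round(fall - spring, 1) for name, (spring, fall) in table.items()}


gains = quartile_gains(table2)
mean_gain = round(sum(gains.values()) / len(gains), 2)

for name, gain in gains.items():
    print(f"{name}: +{gain} points")
print(f"mean gain: {mean_gain} points")
```

The differences are simple subtractions of the two semester columns, so they describe the change in percentage points for each quartile and say nothing about statistical significance.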

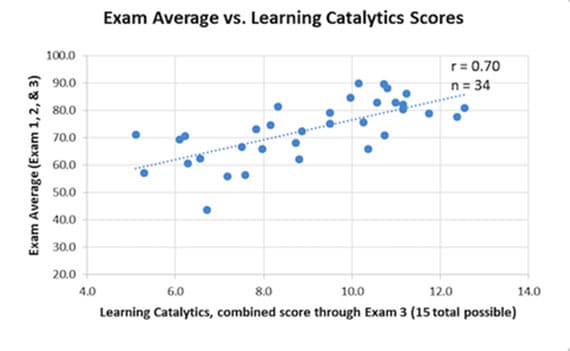

Although not itself a direct indicator of improved success for one semester over another, the correlation between scores from in-class engagement activities and the three-exam average is easily observed in the graph (Figure 1). It is clear from the graph that being prepared with the out-of-class content/assignments, resulting in students performing better during in-class engagement with LC, correlates well with higher exam averages. Students in the Fall 2014 semester were not shown this correlation result; however, this result will be explicitly shared with students at the start of the Spring 2015 semester and future semesters, the hope being that less "self-regulated" students will make behavior modifications to improve success.

A final metric to examine involves how often, and with what timing, students re-visit the in-class session online for review prior to exams. Since Learning Catalytics is cloud-based, each in-class session can be made available to students for re-working, review, or practice after class time. Unfortunately, since this metric is not yet tracked by Learning Catalytics, I will rely on students self-reporting this data in the end-of-semester survey. This may provide another dimension to understand how this particular flipped model helps improve student success.

In conclusion, even though changing to the flipped model of instruction was a significant investment in time and a significant shift in classroom operational modality, an improved rate of student success does seem to exist. The modifications and adjustments that may be made for future semesters, as well as an examination of the content delivery and in-class engagement components actually used, can be the subject of a more in-depth presentation in the near future.